DeepSeek Drops Full R1 Tech Report, Training Path Revealed

DeepSeek R1 now 32x cheaper: NVIDIA slashes inference cost with Blackwell GPUs and TensorRT-LLM


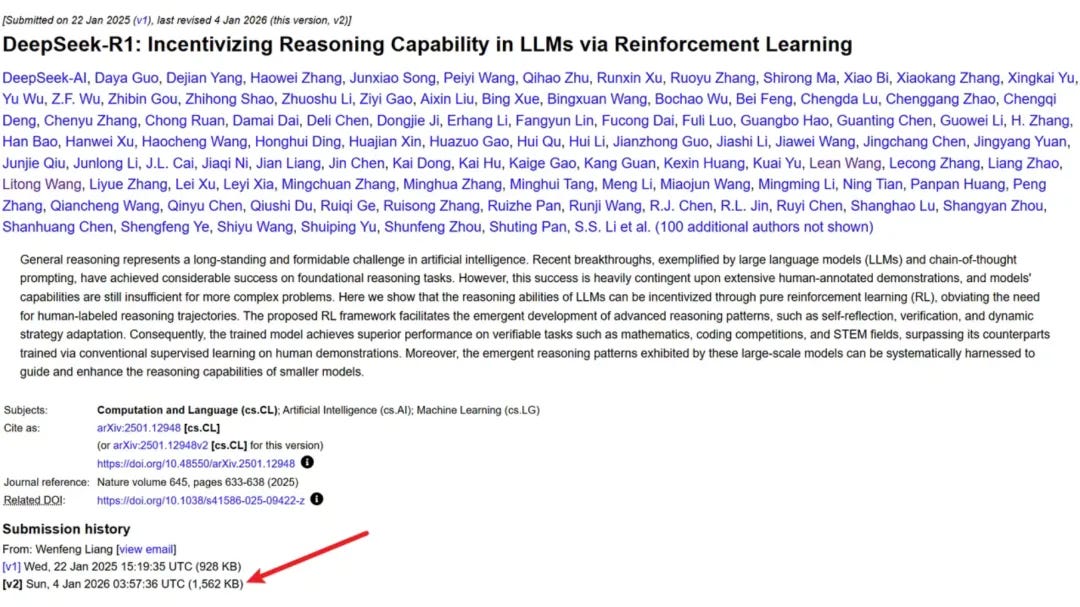

A few days ago, DeepSeek unexpectedly updated its R1 paper, expanding it from the original 22 pages to the current 86 pages.

The new version adds considerable detail. Most notably, it discloses the complete training pipeline for the first time, a four-stage process: cold start, guided RL training, rejection sampling and re-finetuning, and finally RL alignment across all scenarios. It also provides quantitative validation of the “Aha Moment,” among other additions.

DeepSeek-R1 is an open-source reasoning large language model released on January 20, 2025. It uses a Mixture-of-Experts (MoE) architecture with 671 billion total parameters, of which only 37 billion are activated per token, which significantly improves training efficiency.
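To put those two parameter counts in proportion, here is a minimal sketch of the arithmetic. The helper name `active_fraction` is hypothetical and used only for illustration; the two figures are the ones quoted above.

```python
def active_fraction(total_params: float, active_params: float) -> float:
    """Return the share of a sparse MoE model's parameters used per token."""
    if total_params <= 0 or not 0 <= active_params <= total_params:
        raise ValueError("expected total_params > 0 and 0 <= active_params <= total_params")
    return active_params / total_params


# Figures quoted in the article for DeepSeek-R1.
TOTAL_PARAMS = 671e9   # total parameters
ACTIVE_PARAMS = 37e9   # parameters activated per token

share = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
print(f"Active per token: {share:.1%}")
```

In other words, each token touches roughly 5.5% of the model's weights, which is where the efficiency of a sparse MoE design comes from.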

The release of R1 last year shook the global AI field, and its high-efficiency model architecture, training methods, engineering optimizations, and distillation methods have since become industry-wide trends.

Surprisingly, less than a year later, the cost per token for the R1 model has dropped to 1/32 of what it was!
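For readers who prefer percentages, the sketch below converts a "32x cheaper" factor into a relative saving. The function name `relative_saving` is hypothetical, and the calculation assumes the factor compares cost per token before and after under otherwise identical conditions.

```python
def relative_saving(reduction_factor: float) -> float:
    """Convert an 'N times cheaper' factor into the fraction of cost saved."""
    if reduction_factor < 1:
        raise ValueError("reduction_factor must be at least 1")
    # New cost is 1/N of the old cost, so the saving is 1 - 1/N.
    return 1.0 - 1.0 / reduction_factor


saving = relative_saving(32)
print(f"Cost per token saved: {saving:.3%}")
```

A 32x reduction therefore corresponds to a saving of about 97% per token.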

Today, NVIDIA published a lengthy blog post demonstrating how it has further reduced costs and improved efficiency for DeepSeek-R1 on Blackwell GPUs through hardware-software co-design.