Claude Code Auto Mode: Full AI Control, Zero Risk

Claude Code Auto Mode: AI handles permissions, blocks risky ops, cuts approval fatigue.


By default, Claude Code asks for user confirmation before executing commands or modifying files. In practice, however, data shows that users approve 93% of these requests.

When there are too many prompts, people become numb to them.

This is known as “approval fatigue”: users gradually stop reading carefully what they are actually approving.

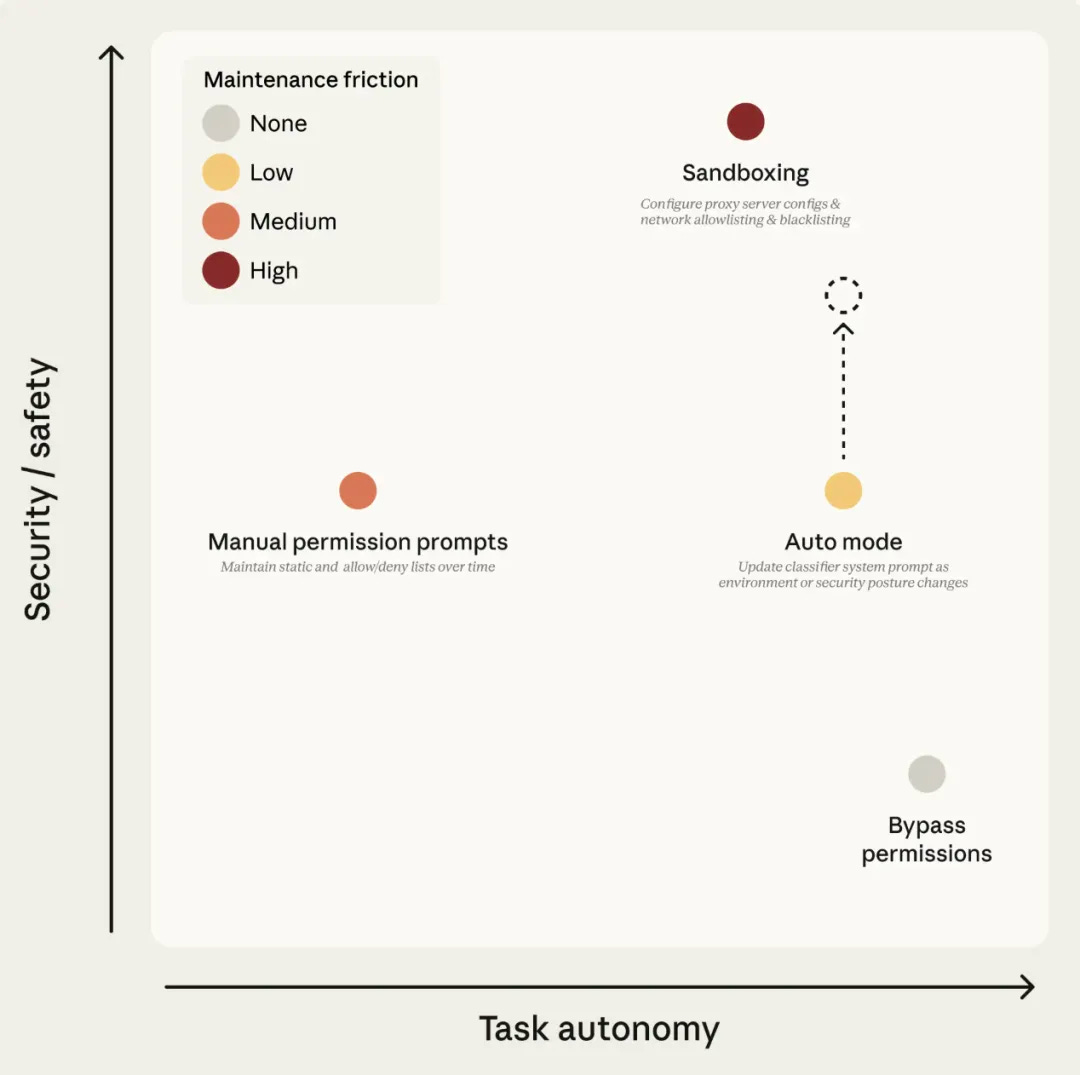

To bypass this fatigue, users previously had two options:

First, sandbox mode, which isolates tools in a restricted environment. It is safe, but it requires ongoing maintenance: every time a new capability is added, the sandbox must be reconfigured, and once a task needs network access or host machine access, the isolation is broken.

Second, passing the `--dangerously-skip-permissions` flag, which skips all permission prompts and lets Claude act freely. It is convenient, but it provides essentially no protection.

Now, Anthropic has introduced a third path: Auto Mode.